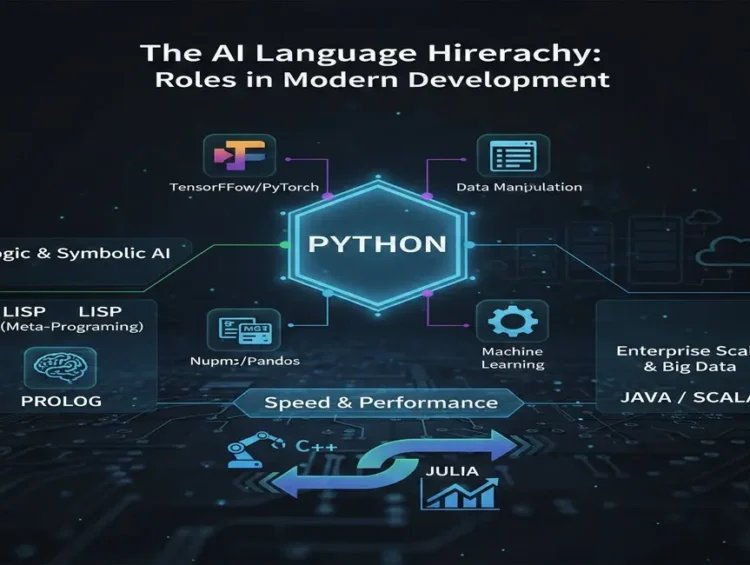

Must-Know Insights: Artificial Intelligence Coding Languages — The Ultimate 2025 Guide to Python, Lisp, and the Best AI/ML Development Tools

1. Introduction: The Critical Junction of Artificial Intelligence Coding Languages

Artificial Intelligence (AI) is the defining technology of the 21st century. From personalized recommendation engines to advanced robotic control and complex medical diagnostics, AI is transforming industries globally. Yet, the prowess of AI models—their speed, accuracy, and scalability—is inextricably linked to the underlying Artificial Intelligence Coding Languages used for their development.

The choice of a programming language in an AI project is not merely a technical formality; it is a strategic decision that dictates the project’s performance, computational efficiency, maintenance overhead, and long-term viability. This ultimate guide is designed to serve as a deep educational resource, dissecting the landscape of Artificial Intelligence Coding Languages—from the dominance of Python to the historical significance of Lisp and the emerging potential of niche competitors like Julia. Our goal is to equip you with the detailed knowledge necessary to select the perfect tool for any AI or Machine Learning (ML) endeavor.

2. The Foundational Role and Technical Imperatives of AI Languages

Unlike conventional software, AI systems deal primarily with massive datasets, complex mathematical operations (especially linear algebra), and iterative learning processes. Consequently, the suitability of a programming language for AI is measured by its capacity to handle these unique demands efficiently.

2.1. Key Technical Criteria for Selecting Artificial Intelligence Coding Languages

When evaluating a candidate among the Artificial Intelligence Coding Languages, developers should weigh these technical factors:

Library and Framework Maturity: The availability of well-tested, high-performance libraries (like TensorFlow, PyTorch, NumPy) for mathematical operations, data manipulation, and model training is paramount. A lack of robust libraries necessitates time-consuming custom development.

Computational Speed (Execution Time): ML models, particularly Deep Learning (DL) models, require billions of calculations. The language must either be inherently fast (compiled) or provide efficient mechanisms (like C/C++ bindings) to hand the heavy lifting to compiled code and accelerated hardware (GPUs/TPUs).

Concurrency and Parallelism: Modern AI relies heavily on parallel processing across multiple CPU cores or specialized accelerators. The language’s ability to manage threads and distributed computing seamlessly is a major asset.

Community and Documentation: An active global developer community provides rapid troubleshooting, continuous updates to frameworks, and extensive documentation, which is vital in the fast-evolving AI domain.
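The parallelism criterion above can be illustrated with a minimal sketch of the split, process, and combine pattern, using only Python's standard library. The function names and the chunking strategy are illustrative assumptions. Note that in standard CPython, threads do not speed up pure-Python arithmetic like this; real workloads typically hand this step to process pools or to native libraries, so treat the code as a picture of the pattern rather than a benchmark.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum_of_squares(chunk):
    """Work done independently on one slice of the data."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data, workers=4):
    """Split the data into chunks, process them concurrently, combine results."""
    size = max(1, len(data) // workers)
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns the partial results in chunk order.
        return sum(pool.map(partial_sum_of_squares, chunks))


total = parallel_sum_of_squares(list(range(1000)))
print(total)
```

The same structure carries over to distributed training: each worker handles a shard of the data, and a final reduction step merges the partial results.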

3. Python: The Reigning King of Machine Learning and Deep Learning

Python’s ubiquity in data science and AI is a testament to its elegant simplicity and the strength of its ecosystem. Although it is an interpreted language, its extensive network of scientific libraries makes it the leading choice among all Artificial Intelligence Coding Languages.

3.1. The Unmatched Ecosystem: Scientific Stack and Data Manipulation

Python’s scientific stack provides an unparalleled toolset for the entire AI workflow:

NumPy (Numerical Python): This is the bedrock. It provides efficient array objects and fundamental routines for scientific computing. Pandas and Scikit-learn build directly on NumPy arrays, and the major deep learning frameworks interoperate with them, which makes fast matrix and vector operations accessible across the stack.

Educational Insight: NumPy’s efficiency comes from its underlying implementation in C, effectively mitigating Python’s inherent slowness for numerical tasks.

Pandas: Built on top of NumPy, Pandas provides the indispensable `DataFrame` structure, making data cleaning, manipulation, alignment, and analysis intuitive. For any data scientist, Pandas is the go-to tool for pre-processing raw data.

Scikit-learn: This library is the gold standard for classical Machine Learning (Clustering, Classification, Regression). It features a consistent API that allows developers to quickly prototype models and benchmark different algorithms.
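To make the NumPy point above concrete, here is a minimal sketch that computes the same dot product twice: once with an explicit Python loop and once with a single vectorized NumPy call that runs in compiled code. The sample vectors and the helper name `dot_pure_python` are illustrative assumptions, and the example assumes NumPy is installed.

```python
import numpy as np


def dot_pure_python(a, b):
    """Dot product with an explicit Python loop (interpreted, element by element)."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total


values_a = [1.0, 2.0, 3.0, 4.0]
values_b = [0.5, 0.25, 2.0, 1.0]

loop_result = dot_pure_python(values_a, values_b)

# The same computation as one vectorized call on NumPy arrays.
vectorized_result = float(np.dot(np.array(values_a), np.array(values_b)))

print(loop_result, vectorized_result)  # both are 11.0
```

On four elements the difference is invisible; the gap tends to show up on large arrays, where the per-element interpreter overhead of the loop dominates.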

3.2. The Deep Learning Giants: TensorFlow and PyTorch

The dominance of Python is cemented by the industry’s two leading Deep Learning frameworks:

TensorFlow (Backed by Google): Known for its robustness in production environments and its scalability for distributed training. TensorFlow 1.x was built around a static computation graph; TensorFlow 2.x runs eagerly by default but can still compile functions into graphs (via `tf.function`), which helps when deploying across platforms such as mobile and web. The integrated Keras API makes model building highly accessible.

PyTorch (originally developed by Meta, now governed by the PyTorch Foundation): Extremely popular within the academic research community due to its dynamic computation graph. This “eager execution” approach builds the graph as the code runs, which simplifies debugging, enhances flexibility, and provides a development experience much closer to native Python coding.
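The idea of a dynamic computation graph can be sketched in plain Python. The toy `Value` class below is an illustrative assumption, not PyTorch's or TensorFlow's actual implementation: it records each operation as it executes and then walks that record backwards to compute gradients. Because the graph is built while the code runs, an ordinary `if` statement can change its shape from one call to the next.

```python
class Value:
    """A scalar that remembers how it was computed so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward_rule = None  # set by the operation that created this node

    def __add__(self, other):
        out = Value(self.data + other.data, (self, other))

        def rule():
            self.grad += out.grad
            other.grad += out.grad

        out._backward_rule = rule
        return out

    def __mul__(self, other):
        out = Value(self.data * other.data, (self, other))

        def rule():
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward_rule = rule
        return out

    def backward(self):
        """Order the recorded graph topologically, then apply the chain rule."""
        order, seen = [], set()

        def visit(node):
            if node not in seen:
                seen.add(node)
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            if node._backward_rule is not None:
                node._backward_rule()


def model(x, w, b, use_bias):
    # Plain Python control flow decides which operations get recorded.
    y = x * w
    if use_bias:
        y = y + b
    return y * y


x, w, b = Value(2.0), Value(3.0), Value(1.0)
loss = model(x, w, b, use_bias=True)
loss.backward()
print(loss.data, w.grad, b.grad)  # 49.0 28.0 14.0
```

A static-graph system would instead require the whole computation, including the branch, to be declared before any data flows through it, which is what makes it easier to optimize and export but harder to debug interactively.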

Real-World Example: A typical Python AI workflow moves from data ingestion (Pandas) to model training (PyTorch), evaluation (Scikit-learn), and deployment (TensorFlow or Python APIs).

In an enterprise environment, a team predicting equipment failure might use Python’s NLP libraries (NLTK, spaCy) to process maintenance logs, train the core model with PyTorch, and then serve the final model through a high-performance Python web framework such as FastAPI.

4. C++ and Java: Performance, System Control, and Enterprise Backbone

While Python is used for rapid prototyping and training, C++ and Java take precedence when execution speed, memory control, and integration into large-scale enterprise infrastructure are the primary concerns. These languages bridge the gap between AI research and mission-critical applications.

4.1. C++: The Pursuit of Microsecond Precision

C++ stands out among Artificial Intelligence Coding Languages for its low-level memory management and compiled nature, offering unmatched speed and efficiency.

Robotics and Embedded Systems: In robotics (for example, systems built on ROS), autonomous vehicles, and drones, milliseconds matter. C++ enables very low and predictable latency for real-time sensor processing and decision-making.

Performance-Critical Inference: When an already-trained model must serve a very high volume of requests on a production server (inference), C++ inference runtimes (e.g., the ONNX Runtime C++ API, or TensorFlow Serving, which is itself written in C++) are used to maximize throughput.

Library Optimization: As discussed, the core computational kernels of almost all high-performance AI libraries are written in C or C++. Knowledge of C++ is invaluable for those looking to contribute to or optimize these foundational components.

4.2. Java and Scala (JVM): Scaling the Enterprise AI Stack

The Java Virtual Machine (JVM) ecosystem is the backbone of many Fortune 500 companies, making Java and its cousin Scala essential Artificial Intelligence Coding Languages for enterprise integration.

Distributed Computing: The JVM ecosystem is essential for Big Data processing. Frameworks like Apache Hadoop, Apache Spark, and Flink are written in Java/Scala. When training AI models on petabytes of data, leveraging Spark’s MLlib (Machine Learning Library) is often the fastest path.

Deeplearning4j (DL4J): This is a powerful, open-source deep learning library for the JVM designed to integrate seamlessly into existing Java enterprise workflows. It is particularly valued in finance, healthcare, and IT for production-level AI deployment.

5. Lisp and Prolog: The Pillars of Symbolic and Logical AI

Before the rise of statistical Machine Learning, early AI research focused on symbolic reasoning, problem-solving, and logical inference. Lisp and Prolog are the legacy Artificial Intelligence Coding Languages of this era, and they retain niche relevance today.

5.1. Lisp: Flexibility Through Homoiconicity

Lisp, created by John McCarthy, is distinguished by its radical design principles:

Homoiconicity: This is Lisp’s superpower. Code is represented as data (S-expressions), allowing Lisp programs to write and modify other Lisp programs at runtime. This meta-programming capability makes it incredibly flexible for creating domain-specific languages and sophisticated experimental AI systems.

Dynamic and Interactive Development: Lisp encourages an exploratory style of programming, where developers can modify the program while it is running, which is ideal for research-oriented AI projects where requirements are constantly evolving.

Current Relevance: While not mainstream, Lisp dialects (like Clojure) are used in areas requiring dynamic rule engines and sophisticated expert systems where the logic structure frequently changes.
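Homoiconicity is easiest to appreciate by seeing code handled as ordinary data. The following is a rough Python approximation, not real Lisp: expressions are written as nested lists (standing in for S-expressions), one function rewrites the program before it runs (loosely analogous to a macro), and another evaluates it. The `square` form and both function names are hypothetical, chosen only for illustration.

```python
import operator

OPERATORS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(expr):
    """Evaluate a nested-list expression such as ["+", 1, ["*", 2, 3]]."""
    if not isinstance(expr, list):
        return expr  # a number evaluates to itself
    op, *args = expr
    values = [evaluate(arg) for arg in args]
    result = values[0]
    for value in values[1:]:
        result = OPERATORS[op](result, value)
    return result


def expand_square(expr):
    """Macro-like rewrite: turn ["square", e] into ["*", e, e] everywhere."""
    if not isinstance(expr, list):
        return expr
    expanded = [expand_square(part) for part in expr]
    if expanded[0] == "square":
        return ["*", expanded[1], expanded[1]]
    return expanded


program = ["+", 1, ["square", ["+", 2, 3]]]
rewritten = expand_square(program)  # the program is transformed as plain data
result = evaluate(rewritten)
print(rewritten, result)  # result is 26
```

In Lisp this needs no separate representation: the program text already is the list structure, so a transformation like `expand_square` operates directly on the language's own code.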

5.2. Prolog: Programming by Logic

Prolog (Programming in Logic) introduced the paradigm of declarative programming to AI. Instead of telling the computer how to solve a problem, you define the facts and rules, and the language’s inference engine deduces the answers.

Declarative vs. Imperative:

Imperative (Python): Define steps to achieve a result.

Declarative (Prolog): Define the result’s properties; the system figures out the steps.

Expert Systems: Prolog remains a strong choice for rule-based expert systems in the tradition of early medical diagnostic tools such as MYCIN (which was itself written in Lisp), and for natural language processing tasks that require robust semantic analysis based on defined linguistic rules.

Example: Defining relationships (e.g., `parent(X, Y)`) and rules (e.g., `ancestor(X, Z) :- parent(X, Y), ancestor(Y, Z)`). The programmer queries the system, and Prolog searches the knowledge base to find the valid outcomes.
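To show what such an inference engine does on the programmer's behalf, here is a minimal Python sketch that derives the `ancestor` relation from a handful of `parent` facts. Several things here are assumptions: the family names are invented, a base case (`ancestor(X, Z) :- parent(X, Z)`) is added because the recursive rule alone never terminates in a fact, and the evaluation strategy is a simple bottom-up fixpoint rather than the top-down, backtracking search a real Prolog system performs.

```python
# Hypothetical facts, each read as parent(X, Y): X is a parent of Y.
PARENT_FACTS = {("alice", "bob"), ("bob", "carol"), ("carol", "dave")}


def derive_ancestors(parent_facts):
    """Apply the ancestor rules repeatedly until no new facts appear.

    ancestor(X, Z) :- parent(X, Z).                  (assumed base case)
    ancestor(X, Z) :- parent(X, Y), ancestor(Y, Z).  (recursive rule)
    """
    ancestor = set(parent_facts)  # base case: every parent is an ancestor
    changed = True
    while changed:
        changed = False
        for x, y in parent_facts:
            for y2, z in list(ancestor):
                if y == y2 and (x, z) not in ancestor:
                    ancestor.add((x, z))
                    changed = True
    return ancestor


ancestor_relation = derive_ancestors(PARENT_FACTS)

# Query: who are the ancestors of dave?
ancestors_of_dave = sorted(x for x, z in ancestor_relation if z == "dave")
print(ancestors_of_dave)  # ['alice', 'bob', 'carol']
```

In Prolog, the two rules and the facts would be the entire program; the loop above is the part the language's engine supplies.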

6. Emerging and High-Performance Artificial Intelligence Coding Languages

The demand for speed and seamless integration into scientific workflows has spurred the creation of new Artificial Intelligence Coding Languages that directly challenge Python’s performance limitations.

6.1. Julia: The Need for Speed in Scientific Computing

Julia was specifically designed for numerical and scientific computing, aiming to eliminate the “two-language problem” (where slow Python code must be rewritten in C++ for production).

JIT Compilation: Julia uses a Just-in-Time (JIT) compiler, allowing well-written code to approach C/Fortran performance for loops and mathematical operations while keeping a high-level, expressive syntax comparable to Python’s.

Native Parallelism: Julia offers built-in support for parallelism and distributed computation, which is vital for modern, data-intensive ML training and execution.

Application Niche: Highly valued in computational finance, climate modeling, and large-scale optimization problems where raw computation speed is a critical requirement.

6.2. R: The Statistical Powerhouse

While often grouped with Python, R maintains its own domain in statistical rigor and data visualization.

Statistical Modeling Depth: R offers the deepest array of packages for statistical hypothesis testing, time-series analysis, and traditional econometrics, making it the primary choice for academic and high-level statistical analysis supporting AI projects.

Data Visualization Excellence: R’s `ggplot2` library is globally renowned for producing publication-quality, aesthetically sophisticated statistical graphics.

Integration: It often serves as the final step in an ML pipeline, where the results of a Python-trained model are passed to R for advanced statistical validation and comprehensive reporting.

7. Strategic Selection: Building Your Polyglot AI Toolkit

Choosing the right Artificial Intelligence Coding Languages means adopting a polyglot (multi-language) approach. The optimal language depends entirely on the specific phase of the project:

| Project Phase | Primary Goal | Recommended Language Set | Rationale |
| --- | --- | --- | --- |
| I. Research & Prototyping | Rapid Iteration, Exploration | Python, R | Unmatched library support (TensorFlow, PyTorch) and easy syntax for quick experimentation. |
| II. Big Data Pre-processing | Scalability, Distributed Computing | Java/Scala | Native integration with Apache Spark/Hadoop infrastructure for handling massive data volumes. |
| III. High-Speed Deployment | Low Latency, Real-Time Inference | C++, Julia | Compiled execution for maximizing throughput and minimizing the time between request and response. |
| IV. Domain-Specific Systems | Knowledge Representation, Logic | Prolog, Lisp | Ideal for expert systems and complex rule-based decision architectures that require deductive reasoning. |

8. Conclusion: The Future of Artificial Intelligence Coding Languages

The domain of Artificial Intelligence Coding Languages is complex and dynamic. Python’s position as the primary language for development and research is secure due to its mature ecosystem and accessibility. However, the future points toward specialized polyglot development: leveraging C++ or Julia for speed where necessary, Java/Scala for corporate integration, and Python for the connective tissue and initial training phase.

Your journey into AI development demands not just proficiency in one language, but a comprehensive understanding of why each language excels in its specific niche. By mastering the strategic choice among these powerful Artificial Intelligence Coding Languages, you are fully equipped to build the next generation of intelligent systems that will define 2025 and beyond.
